Apple’s Augmented Reality Systems for iOS Include “X-Ray Vision” Mode

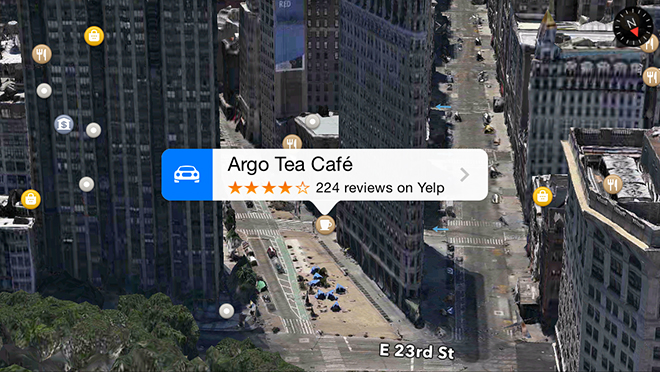

The U.S. Patent and Trademark Office has today published two new Apple patent applications titled ‘Federated mobile device positioning’ and ‘Registration between actual mobile device position and environmental model’, which detail an augmented reality navigation solution for iOS capable of providing users with “enhanced virtual overlays of their surroundings” including an “X-ray vision” mode, AppleInsider reports.

Apple’s proposed system uses GPS, Wi-Fi signal strength and sensor data to determine a user’s location. The device then downloads a three-dimensional model of the surrounding area, complete with wireframes and image data for nearby buildings and points of interest (POIs). To place the model accurately, Apple proposes that the virtual frame be “overlaid atop live video fed by an iPhone’s camera”. Apple notes that users can align the 3D asset with the live feed by manipulating it onscreen through gestures.

“In addition to user input, the device can compensate for pitch, yaw and roll, as well as other movements, to estimate positions in space as associated with the live world view. The ‘X-ray vision’ feature was not fully detailed in either document aside from a note saying imaging assets for building interiors are to be stored on offsite servers. It can be assumed, however, that a vast database of rich data would be needed to properly coordinate the photos with their real life counterparts.”

Apple’s augmented reality patent applications were first filed in March 2013.