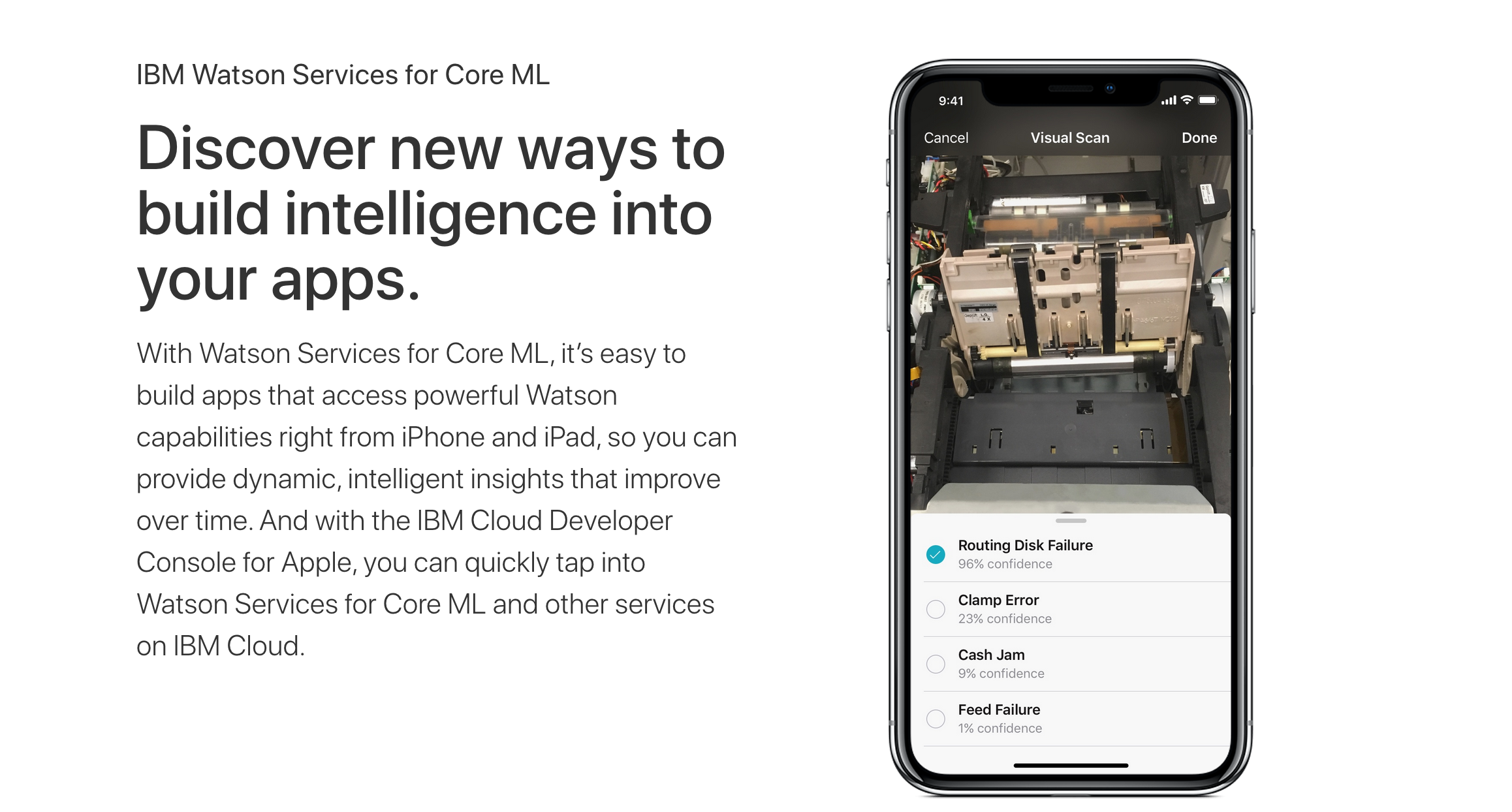

Apple Partners with IBM on Watson Service Integration via Core ML Framework

On Monday, Apple and IBM announced an expansion of their existing partnership that will let developers add advanced machine learning capabilities to their apps.

The project, called Watson Services for Core ML, lets employees analyze images, classify video content, and train machine learning models using Watson services backed by Core ML, Apple’s own machine learning framework. For instance, an enterprise app might be trained to distinguish a broken appliance from a functional one using the device’s camera.

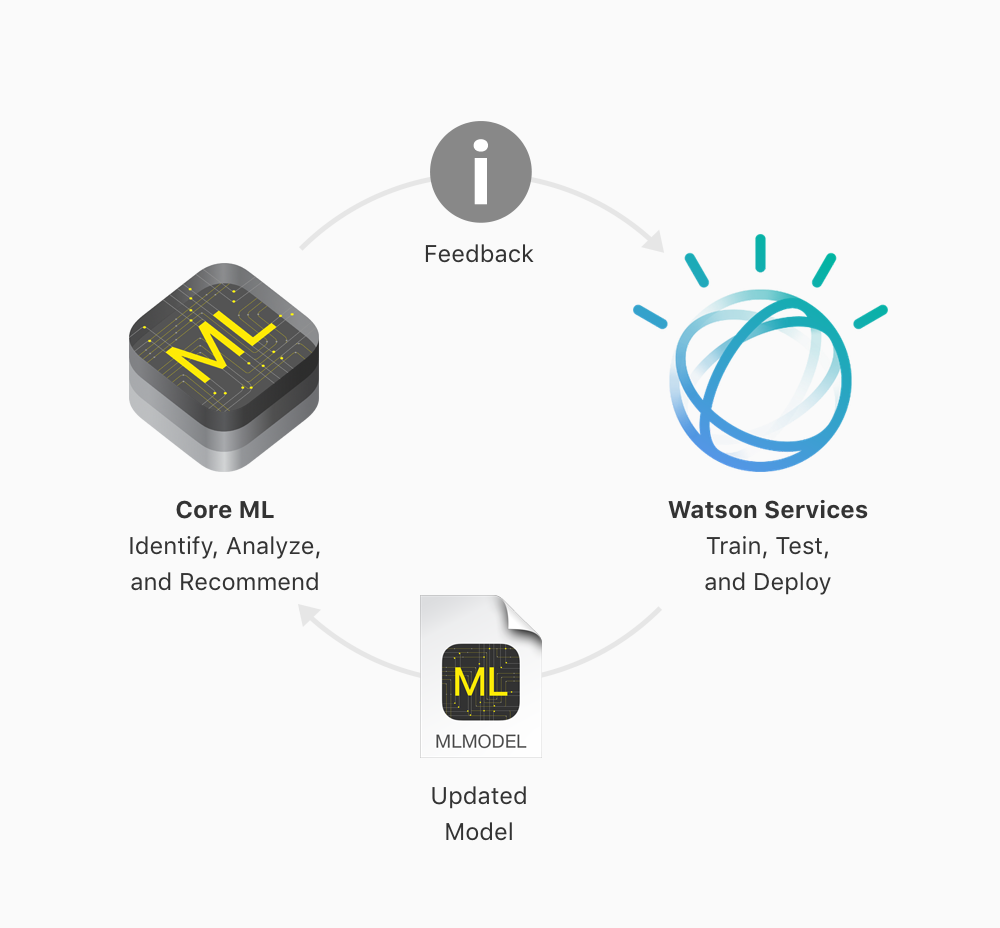

Integrating Watson services with iOS is straightforward. Developers first build a model with Watson, then convert it into a format that Apple’s Core ML can understand. The custom app can then use that model natively.
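To make the last step concrete, here is a minimal sketch of how an app might run a converted model on the device using Apple's Core ML and Vision frameworks. It assumes the Watson-trained model is an image classifier that has already been converted and compiled for Core ML. The `Prediction` type, the `bestPrediction` and `classify` helpers, and the 0.5 threshold are hypothetical names and values chosen for illustration; they are not part of Watson Services for Core ML.

```swift
import Foundation
import CoreGraphics
import CoreML
import Vision

/// A label with its confidence (hypothetical helper type for this sketch).
struct Prediction: Equatable {
    let label: String
    let confidence: Float
}

/// Returns the most confident prediction at or above `threshold`, or nil if none qualifies.
func bestPrediction(in predictions: [Prediction], threshold: Float = 0.5) -> Prediction? {
    predictions
        .filter { $0.confidence >= threshold }
        .max { $0.confidence < $1.confidence }
}

/// Runs the compiled Core ML image classifier at `modelURL` on `image`.
/// Assumption: `modelURL` points to a compiled model (.mlmodelc) bundled with the app.
func classify(_ image: CGImage, modelURL: URL) throws -> [Prediction] {
    let mlModel = try MLModel(contentsOf: modelURL)
    let visionModel = try VNCoreMLModel(for: mlModel)

    // Vision scales and crops the image to the model's expected input size.
    let request = VNCoreMLRequest(model: visionModel)
    request.imageCropAndScaleOption = .centerCrop

    // perform(_:) runs synchronously; call it off the main thread in a real app.
    let handler = VNImageRequestHandler(cgImage: image, options: [:])
    try handler.perform([request])

    // A classifier model yields classification observations; anything else maps to an empty list.
    let observations = request.results as? [VNClassificationObservation] ?? []
    return observations.map { Prediction(label: $0.identifier, confidence: $0.confidence) }
}
```

In the appliance example, an app could call `bestPrediction(in: try classify(photo, modelURL: url))` and check whether the returned label is, say, `"broken"` or `"functional"`. Those label names depend entirely on how the model was trained and are assumed here.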

Core ML was first introduced last year at the Worldwide Developers Conference. The framework makes it easier to integrate machine learning models built with third-party tools into an iOS app. Core ML is one step in Apple’s big push into machine learning, which began with iOS 11 and the A11 Bionic chip in the latest iPhones.