Here’s A Mood Analysis Of Tim Cook’s Speech From Last Month’s Keynote [VIDEO]

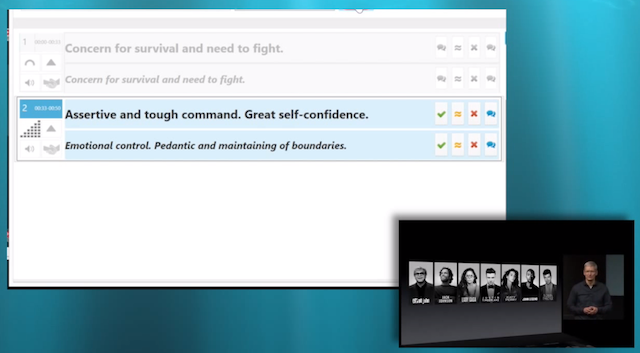

Philip Elmer-DeWitt, the editor of Fortune Apple 2.0, has used Beyond Verbal’s Moodies mood recognition app to analyze the mood of Apple CEO Tim Cook during his keynote speech at the iPhone 5S launch event last month. The results, he says, are very different when the same “emotion detection engine” is applied to the sound of Steve Jobs’s voice during an Apple event keynote.

According to the app, analysis of the first two minutes of Tim Cook’s iPhone keynote speech shows feelings of “Emotional control, pedantic and maintaining of boundaries”. By contrast, analysis of a similar Steve Jobs video shows “Loneliness, fatigue, emotional frustration”. Like the author, I’m still skeptical about the results, especially the idea, floated in a Beyond Verbal promotional video, that “someday men will use their mobile apps to finally figure out what women want”.

I didn’t see how something like that could be done without a fair bit of time-consuming field work and machine learning. In my mind I placed it in the hierarchy of personality analysis somewhere between astrology and Myers-Briggs.

That was before I chatted with the folks at Beyond Verbal and played around with the Moodies app on their home page. The company has done a fair bit of field work and machine learning, processing recordings and questionnaires from more than 70,000 volunteers over the past 18 years.

Well, what do you think, guys?