Apple Introduces New ‘FaceTime Attention Correction’ Feature in iOS 13

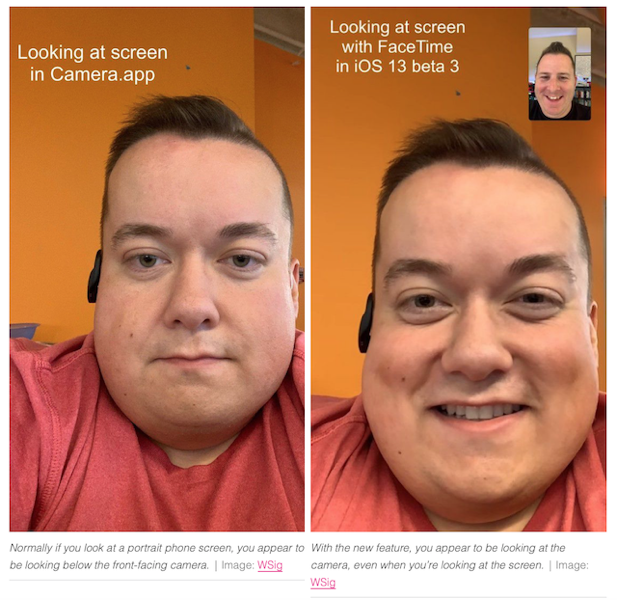

Normally during a FaceTime call, participants appear to be looking off to one side, since they are looking at each other’s faces on the display rather than directly at their front-facing cameras.

With a new feature in iOS 13, Apple has addressed this issue by making it look like the FaceTime caller is staring directly at the camera, even when they are actually looking at the person on the screen, The Verge reports.

First highlighted on Twitter by Mike Rundle, the feature is called “FaceTime Attention Correction”. It is available in iOS 13’s third developer beta and can be toggled on and off in FaceTime settings. At the moment, the feature seems to work only on the iPhone XS, iPhone XS Max, and iPhone XR.

The feature appears to use some kind of image manipulation to correct the caller’s gaze, resulting in realistic-looking fake eye contact between the FaceTime users. Coincidentally, Rundle himself theorized back in 2017 that Apple would one day do this, although not so soon.

On Twitter, Dave Schukin explains that the effect is being achieved using ARKit, which is used to map a user’s face and adjust the positioning of their eyes accordingly.

It is not yet known whether Apple will enable the feature for other iPhone models in the final iOS 13 release.
