Google’s “Show and Tell” Algorithm Captions Images with 94% Accuracy

Google has released its “Show and Tell” image captioning algorithm to developers. It can recognize objects in photos with up to 93.9% accuracy, a significant improvement from two years ago, when it identified 89.6% of images correctly (via Engadget). Accurate photo descriptions are useful to historians, visually impaired people and AI researchers, to name a few.

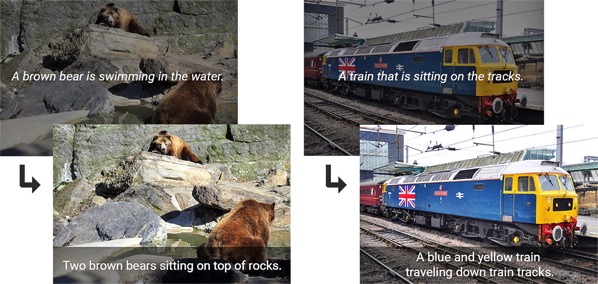

Google’s open-source code release uses its third-gen “Inception” model and a new vision system that’s better at picking out individual objects in a shot. The researchers also fine-tuned it for better accuracy. “For example, an image classification model will tell you that a dog, grass and a frisbee are in the image, but a natural description should also tell you the color of the grass and how the dog relates to the frisbee,” the team wrote.

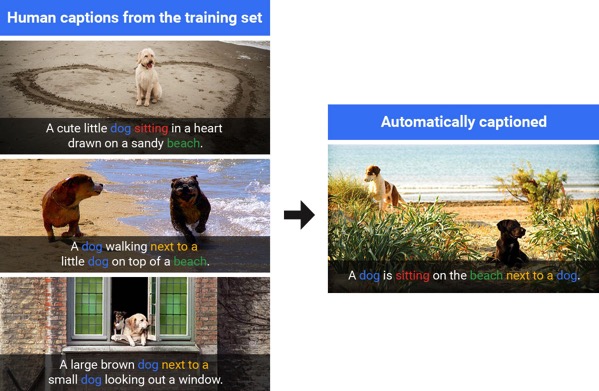

Google’s system, which was trained using human captions, is now able to describe images it hasn’t seen before. Using several photos of dogs on a beach, for instance, it was able to generate a caption for a similar but slightly different scene (see below).

Google has released the source code for its TensorFlow system, and it is available to all developers for free.
