Samsung Scored 18 Stars While Apple Did 22 In J.D. Power Survey, Yet It Beat Apple? [u]

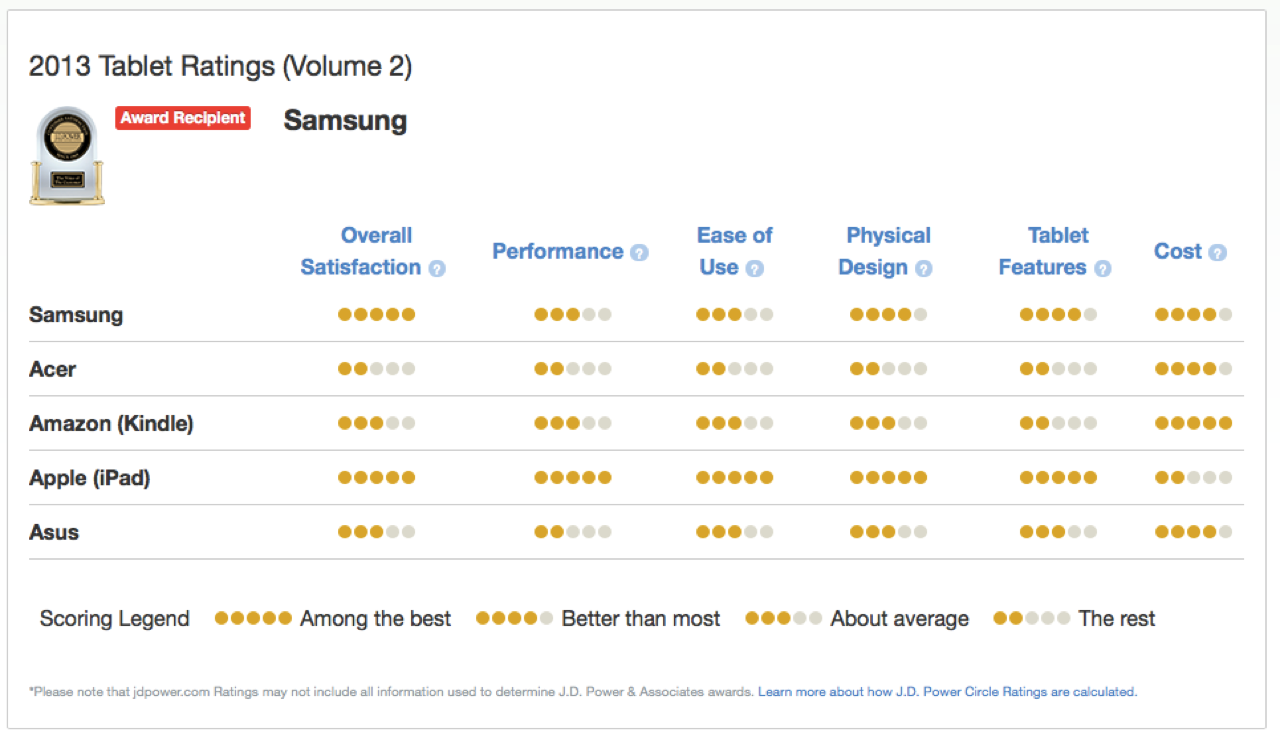

Yesterday, when J.D. Power released the numbers for its latest U.S. tablet satisfaction study, claiming that Samsung ranks highest in owner satisfaction with tablet devices in the United States, the majority of editors and reporters, myself included, highlighted Samsung’s unexpected victory over Apple in their breaking news articles. However, some skeptical reporters, like Fortune’s Philip Elmer-Dewitt, noticed that J.D. Power appears to have seriously messed up its survey results: Apple took home 22 gold stars and Samsung took home 18 (see chart above), and yet J.D. Power rated the latter higher than Apple in “overall satisfaction”.

The survey highlights that Samsung ranks No. 1 with a score of 835, and is the only manufacturer to improve across all five factors since the previous reporting period in April 2013. But in reality, Apple did better than Samsung in four out of five of those categories, scoring the maximum five stars in performance, ease of use, physical design and tablet features.

“The only category that Samsung beat Apple in was (duh) cost. And cost, according to Power’s press release, counts for at most 16% of the total score.

Bottom line: Apple took home 22 gold stars. Samsung took home 18. And then, for reasons known only to itself, J.D. Power and Associates put out a press release under the headline: Samsung Ranks Highest in Owner Satisfaction with Tablet Devices.”

I’m sure we’ll hear an explanation from J.D. Power pretty soon, and once we do, we’ll update you right away. Stay tuned!

[UPDATE] J.D. Power issues an explanation:

As expected, Kirk Parsons, J.D. Power’s senior director of telecommunications services, has responded to Philip Elmer-Dewitt’s request to explain the puzzling numbers in its tablet survey, saying, “It’s very simple, it’s just math.”

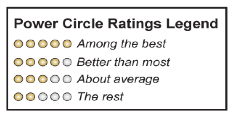

It turns out the only figures that count, “as far as J.D. Power is concerned”, are the ones in its press release, which show Samsung edging Apple by two points, 835 to 833. The problem arises when it uses “Power Circles” to communicate the results to consumers.

“Those gold circles are derived from the same tablet survey, but they don’t reflect the original 0 to 1,000 scale. Rather, they show where each company’s products stand relative to its competitors: ‘among the best,’ ‘about average,’ etc. This system has worked pretty well over the years. But from time to time, signals get crossed: the Power Circles say one thing and the overall rating says another, as they do in the case of Apple’s iPads and Samsung’s tablets. How can that happen? Parsons gives an example, stressing that these are not the real numbers:

Say Apple in four of the categories had a 10 point lead over Samsung — for a total of 40 points. But if Samsung had a big enough lead in that fifth category — say 50 points — that might be enough to push it over the top.”
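To make Parsons’ arithmetic concrete, here is a minimal Python sketch of his illustration. Every number in it is made up: the base score of 800 and the category values are assumptions chosen only to reproduce the 10-point and 50-point leads he describes, and the plain sum ignores whatever category weights J.D. Power actually applies.

```python
# Hypothetical category scores that mirror Parsons' illustration.
# These are NOT J.D. Power's real numbers; BASE is an arbitrary assumption.
BASE = 800

apple = {
    "performance": BASE + 10,      # Apple leads by 10 points
    "ease of use": BASE + 10,      # Apple leads by 10 points
    "physical design": BASE + 10,  # Apple leads by 10 points
    "tablet features": BASE + 10,  # Apple leads by 10 points
    "cost": BASE,
}
samsung = {
    "performance": BASE,
    "ease of use": BASE,
    "physical design": BASE,
    "tablet features": BASE,
    "cost": BASE + 50,             # Samsung leads by 50 points
}


def category_wins(a, b):
    """Count the categories in which `a` scores strictly higher than `b`."""
    return sum(1 for name in a if a[name] > b[name])


def total(scores):
    """Unweighted sum of category scores (the real study weights them)."""
    return sum(scores.values())


apple_wins = category_wins(apple, samsung)
samsung_wins = category_wins(samsung, apple)
margin = total(samsung) - total(apple)

print(f"Apple wins {apple_wins} categories, Samsung wins {samsung_wins}")
print(f"Samsung's overall margin: {margin} points")
```

In this toy setup Apple wins four of the five categories, yet Samsung still finishes ahead overall, because one large lead outweighs four small ones once the points are added up.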

As Elmer-Dewitt puts it so eloquently, “if cost is the criterion by which Samsung edged out Apple — trumping such factors as ease of use and performance — is “satisfied” really the best way to describe how those 3,375 survey participants feel about their tablets?”