Apple Drops its Plans to Scan User Photos for CSAM

Apple has announced that it has permanently dropped its child sexual abuse material (CSAM) detection tool for iCloud Photos in response to the feedback and guidance it received, Wired reports.

Apple has been delaying its CSAM detection features amid widespread criticism from privacy and security researchers and digital rights groups who were concerned that the surveillance capability itself could be abused to undermine the privacy and security of iCloud users.

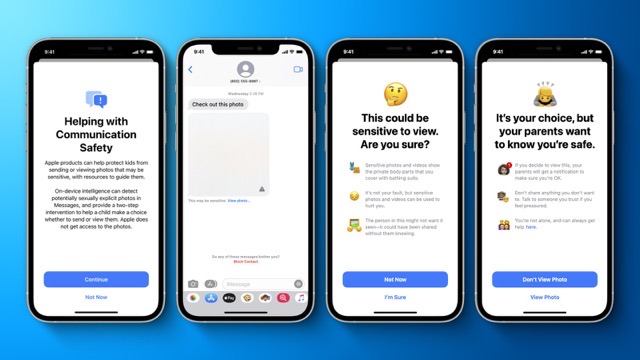

Now, the company is focusing its anti-CSAM efforts and investments on its “Communication Safety” features, which the company announced in August 2021 and launched last December.

Parents and caregivers can opt into the protections through family iCloud accounts. The features work in Siri, Apple’s Spotlight search, and Safari Search to warn if someone is looking at or searching for CSAM and provide resources on the spot to report the content and seek help.

“After extensive consultation with experts to gather feedback on child protection initiatives we proposed last year, we are deepening our investment in the Communication Safety feature that we first made available in December 2021,” Apple said in a statement.

“We have further decided to not move forward with our previously proposed CSAM detection tool for iCloud Photos. Children can be protected without companies combing through personal data, and we will continue working with governments, child advocates, and other companies to help protect young people, preserve their right to privacy, and make the internet a safer place for children and for us all.”

Apple’s CSAM update comes alongside its announcement today that the company is expanding its end-to-end encryption offerings for iCloud, including adding the protection for backups and photos stored on the cloud service.


The pedos and groomers score a win.

You must be real happy then, its_me. After all, Apple still has its nudity detection software and data on every iOS device moving forward. So what’s to stop Apple from enabling it to run on any iOS device they want to check out? Oh, we already know that Apple said that its nudity detection software is only for parents to enable on their kids’ iOS devices. But we also know that Apple ignored its own customers’ privacy settings, and still collected their private data without their permission.

Oh pedo. You sound upset.

I am not upset, I am only telling the truth.

So, long time no see from the Apple groomer himself. How’s it going, do you still give out that funny-tasting candy to kids? Are you using Apple’s nudity detection on the people you love? Or just on those you would like to love?

Too far

Yet it’s okay for its_me to call others pedo? Yet, Leon, you mention nothing about that going too far. Why is that, Leon? So I guess this Leon character is just another one of its_me’s pile of alternate accounts.

You misunderstood; I am not defending It’s Me. He’s a grown-up and can defend himself. I objected to the mudslinging, no matter whom it’s coming from. To be fair, you are the one who first implied he has sympathy for pedos when you said that he must be happy about pedos and groomers scoring a win. As for my not mentioning that he has gone too far, there are a few occasions here when I used these exact words to address what he posted and proceeded to debate him at length. Unless you are implying that he suffers from multiple personality disorder and argues with himself, there are numerous threads where we were in strong disagreement, so suggesting that I am not a real person but one of his alternate accounts is borderline paranoid ideation.

Mudslinging is something I normally don’t do to anyone else, period. See, we have a long history where its_me constantly lies, and has gone out of his way to call others names, and even slander others. But when it comes to that liar named its_me, all that clown ever does is sling mud and stir up crap.

The first post on this article was its_me’s “The pedos and groomers score a win”.

But you still come at me, and not its_me. You say that its_me can defend himself, but you still go after me for mudslinging. Why don’t you say a thing to its_me then? Therefore I still stand by my view that this Leon account is one of its_me’s other accounts. BTW, I have a pretty good feeling that this its_me account actually belongs to one of the people who run this website.

Well, this is disturbing…

Of course Apple is dropping its CSAM scanning, especially since Apple has now embedded its nudity detection software into every iPhone and iPad running iOS 15 and above.

Apple has said that its nudity detection is only for those wanting to enable it on their children’s iOS devices, but since all that software and data is now installed on every iOS device, you have to ask yourself: what’s to stop Apple from enabling it for any customer they want to check on? We already know that Apple ignores its customers’ privacy settings, and will still collect their private data.

No wonder Apple is being taken to federal court in the USA.