New ‘Acoustic Attack’ Found to Steal Data Using Keystroke Sounds

A team of British researchers has developed a sophisticated deep learning model capable of stealing user data from keyboard keystrokes, all by harnessing sound waves (via Bleeping Computer).

The researchers say the method exploits the sound produced by keystrokes and identifies the keys being typed with 95% accuracy.

While examining this new ‘acoustic attack,’ the researchers found that even when the sound classification algorithm was trained on keystrokes recorded over the popular video conferencing tool Zoom, prediction accuracy dipped only slightly, to 93%.

That figure sets a new record for accuracy within this medium and points to a serious security risk.

The implications of such an attack are severe, as it threatens to compromise an individual’s data security on multiple fronts.

From the leakage of sensitive discussions and messages to the exposure of passwords and private information, this technique opens the door for malicious third parties to exploit an individual’s most personal details.

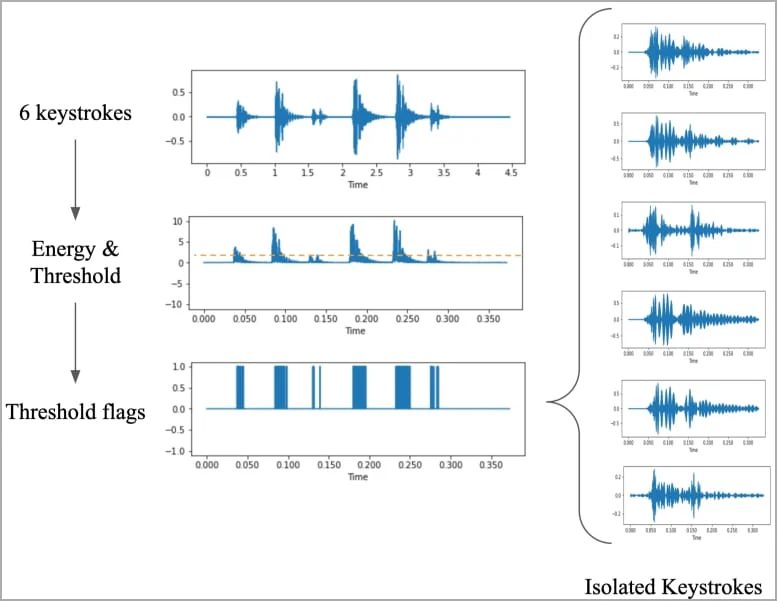

The attack itself is a multi-step process. It begins by recording the audio produced by keyboard keystrokes, a crucial dataset for training the prediction algorithm.

This can be achieved using a nearby microphone or even through the microphone on the target’s phone, which may be compromised by malware. Another avenue is a Zoom call, where a rogue participant correlates the messages the target types with the sound of the typing in the call’s audio.

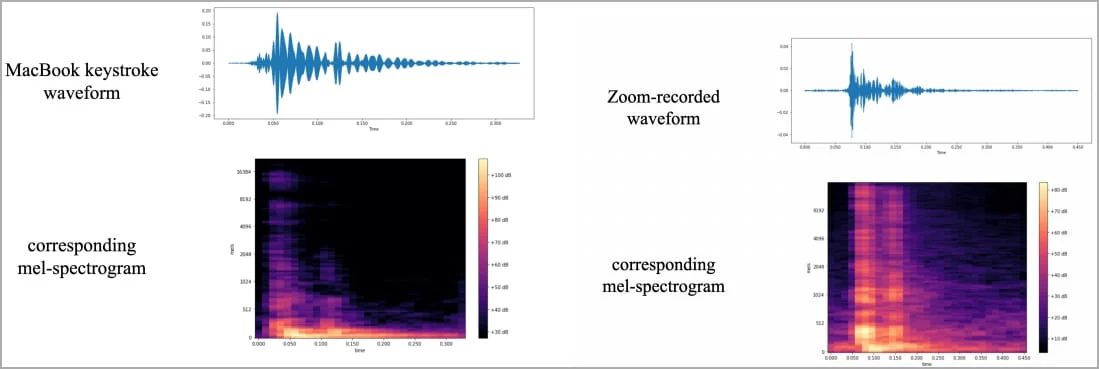

The researchers meticulously gathered their training data, pressing 36 keys on a modern MacBook Pro 25 times each while capturing the resulting sounds.

They then transformed these recordings into waveforms and spectrograms, allowing them to identify distinctive differences for each key. Augmented signals from this process became the foundation for identifying keystrokes.
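To make the waveform-to-spectrogram step concrete, here is a minimal sketch in Python. It assumes a plain short-time Fourier transform and uses a synthetic click in place of a real keystroke recording; the function name, frame sizes, and sample rate are illustrative choices, and the researchers’ exact transform and augmentation steps may differ.

```python
import numpy as np

def spectrogram(signal, frame_len=256, hop=128):
    """Magnitude spectrogram from a Hann-windowed short-time Fourier transform."""
    window = np.hanning(frame_len)
    n_frames = 1 + (len(signal) - frame_len) // hop
    # Slice the waveform into overlapping frames and taper each one.
    frames = np.stack([
        signal[i * hop : i * hop + frame_len] * window
        for i in range(n_frames)
    ])
    # rfft keeps the non-negative frequencies: frame_len // 2 + 1 bins per frame.
    return np.abs(np.fft.rfft(frames, axis=1)).T  # shape: (freq_bins, time_frames)

# Synthetic stand-in for one keystroke: a decaying 2 kHz click (assumption, not real data).
sample_rate = 16000
t = np.arange(4096) / sample_rate
click = np.sin(2 * np.pi * 2000 * t) * np.exp(-40 * t)

spec = spectrogram(click)
# Frequency bin carrying the most energy, converted back to hertz.
peak_bin = int(spec.sum(axis=1).argmax())
peak_hz = peak_bin * sample_rate / 256
```

Each column of `spec` describes one short slice of time and each row one frequency band, so the array can be rendered as an image, which is the kind of input an image classifier expects.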

The resulting classifier uses ‘CoAtNet,’ an image classification model, trained on the spectrogram images. The researchers tuned parameters such as the number of epochs, the learning rate, and the data split to reach peak prediction accuracy.
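For a sense of scale, 36 keys pressed 25 times each gives 900 labeled samples. The sketch below shows one way such a dataset might be divided into training and test sets while keeping every key equally represented. The 80/20 ratio, the helper name `stratified_split`, and the seed are assumptions for illustration; the article does not state the split the researchers used.

```python
import numpy as np

KEYS = 36             # keys recorded, per the article
PRESSES_PER_KEY = 25  # presses per key, per the article

# One integer label per recording: 36 * 25 = 900 samples in total.
labels = np.repeat(np.arange(KEYS), PRESSES_PER_KEY)

def stratified_split(labels, train_fraction=0.8, seed=0):
    """Split sample indices so each key contributes the same share to both sets."""
    rng = np.random.default_rng(seed)
    train_idx, test_idx = [], []
    for key in np.unique(labels):
        # Shuffle this key's samples, then cut at the requested fraction.
        idx = rng.permutation(np.flatnonzero(labels == key))
        cut = int(round(train_fraction * len(idx)))
        train_idx.extend(idx[:cut])
        test_idx.extend(idx[cut:])
    return np.array(train_idx), np.array(test_idx)

# Hypothetical 80/20 split: 20 training and 5 test presses per key.
train_idx, test_idx = stratified_split(labels)
```

Splitting per key rather than across the whole pool avoids a test set that happens to contain few or no examples of some keys, which matters when each key has only 25 recordings.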

The CoAtNet classifier reached 95% accuracy on smartphone-recorded sounds and 93% on audio captured via Zoom. On Skype the figure was slightly lower but still high, at 91.7%.

The paper suggests potential defense strategies, such as altering typing styles, employing randomized passwords, and incorporating software-based keystroke audio filters or white noise.
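As a rough illustration of the white noise idea, the sketch below mixes Gaussian noise into a signal at a chosen signal-to-noise ratio. The function name `add_white_noise`, the 0 dB target, and the synthetic click are assumptions for illustration only; the article does not say how loud the noise must be to defeat the classifier.

```python
import numpy as np

def add_white_noise(signal, snr_db, seed=0):
    """Return the signal mixed with Gaussian white noise at the requested SNR (dB)."""
    rng = np.random.default_rng(seed)
    signal_power = np.mean(signal ** 2)
    # SNR in dB is 10 * log10(signal_power / noise_power); solve for noise_power.
    noise_power = signal_power / (10 ** (snr_db / 10))
    noise = rng.normal(0.0, np.sqrt(noise_power), size=signal.shape)
    return signal + noise, noise

# Synthetic stand-in for keystroke audio (assumption, not real data).
sample_rate = 16000
t = np.arange(sample_rate) / sample_rate
click = np.sin(2 * np.pi * 2000 * t) * np.exp(-40 * t)

# 0 dB means the masking noise carries as much power as the keystroke itself.
masked, noise = add_white_noise(click, snr_db=0.0)
measured_snr_db = 10 * np.log10(np.mean(click ** 2) / np.mean(noise ** 2))
```

A lower SNR buries more of the keystroke’s spectral detail under noise, at the cost of more audible hiss for anyone listening to the call.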
