Apple Child Safety Scanning Feature Raises Concerns Internally

Apple’s new “Expanded Protections for Children” scanning feature, first revealed last week, has drawn concern from many individuals and third-party organizations. It now appears that concerns are being raised inside the company as well.

Apple employees have taken to an internal Slack channel to voice their concerns over the company’s new child protection scanning feature. According to sources cited by Reuters, more than 800 messages were posted on the channel.

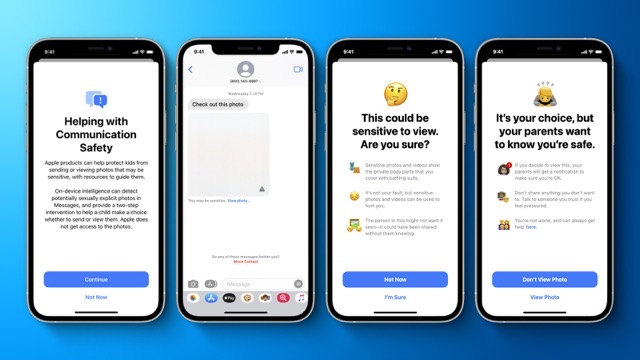

Apple’s Expanded Protections for Children includes a feature that scans iCloud photos for Child Sexual Abuse Material (CSAM), along with a Communication Safety feature for iMessage that warns children and parents when a child may have received sexually suggestive material. Some employees have expressed their belief that this technology could be exploited by repressive governments and could lead to heavy censorship and arrests.

Apple has been facing pushback on this feature since it was revealed to be in the works for US users. Earlier this week, more than 5,000 signatures were amassed asking Apple to halt its plans to roll out the feature. Signatures came from individuals as well as organizations.

WhatsApp has also weighed in with its own concerns. Will Cathcart, head of WhatsApp, went on record to say, “This is an Apple-built and operated surveillance system that could very easily be used to scan private content for anything they or a government decides it wants to control. It’s troubling to see them act without engaging experts.”

The discussion on Apple’s Slack channel drew perspectives from both sides. Some employees felt that Slack wasn’t the most suitable forum for such a discussion. Others hoped the feature would be a step towards fully encrypting iCloud for users who want it.
