Apple Needs to Drop CSAM Detection Plans Entirely, Says Digital Rights Group

On Friday, Apple announced it would delay its new child safety and Child Sexual Abuse Material (CSAM) detection features, taking “additional time over the coming months to collect input and make improvements.”

International digital rights group Electronic Frontier Foundation, in response to the announcement, said that delaying what basically amounts to a backdoor in Apple’s encryption isn’t enough — the tech giant must abandon its surveillance plans altogether and uphold its extensively marketed promises of real user privacy.

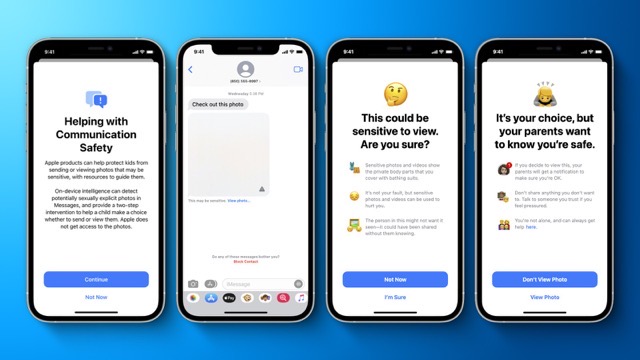

In August, Apple unveiled plans for new child safety features that would be implemented across its ecosystem, scanning (and flagging) minors’ iMessage conversations for nudity, and users’ devices for media classified as CSAM.

The CSAM detection system drew an immediate uproar from Apple’s user base, much of which the iPhone maker has cultivated by being a purveyor of airtight encryption and increased user privacy. Apple’s attempts to address security concerns, and its insistence that the new features would not infringe on user privacy, were in vain.

Digital rights organizations like OpenMedia started petitioning Apple to abandon the CSAM detection system and garnered significant support for their cause. More than 90 activist groups from all over the world even banded together in opposition last month.

Apple’s CSAM detection system would have launched as an additional feature of iOS 15, iPadOS 15 and macOS Monterey later this year.

The Electronic Frontier Foundation argues that Apple’s CSAM detection system is a double-edged sword: it could be reverse-engineered and exploited by bad actors, corrupted and misused by overzealous governments, and even prove dangerous to minors living in abusive or otherwise oppressive households. As such, the group says, it should be dropped altogether, not just delayed.