Apple Details Face Detection in Latest Machine Learning Journal Entry

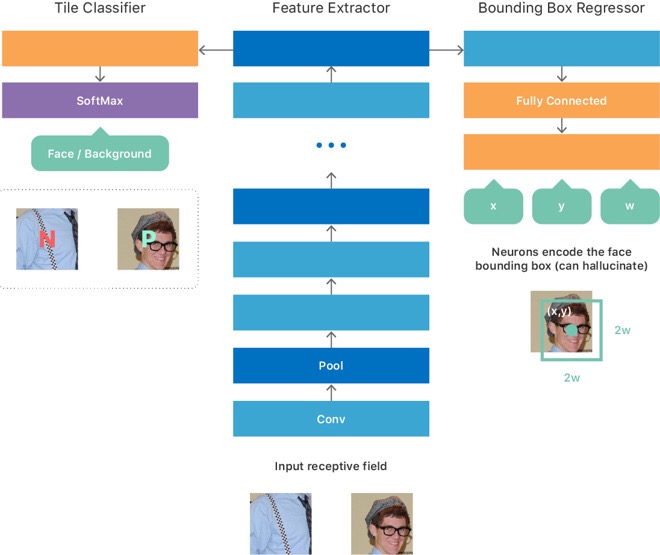

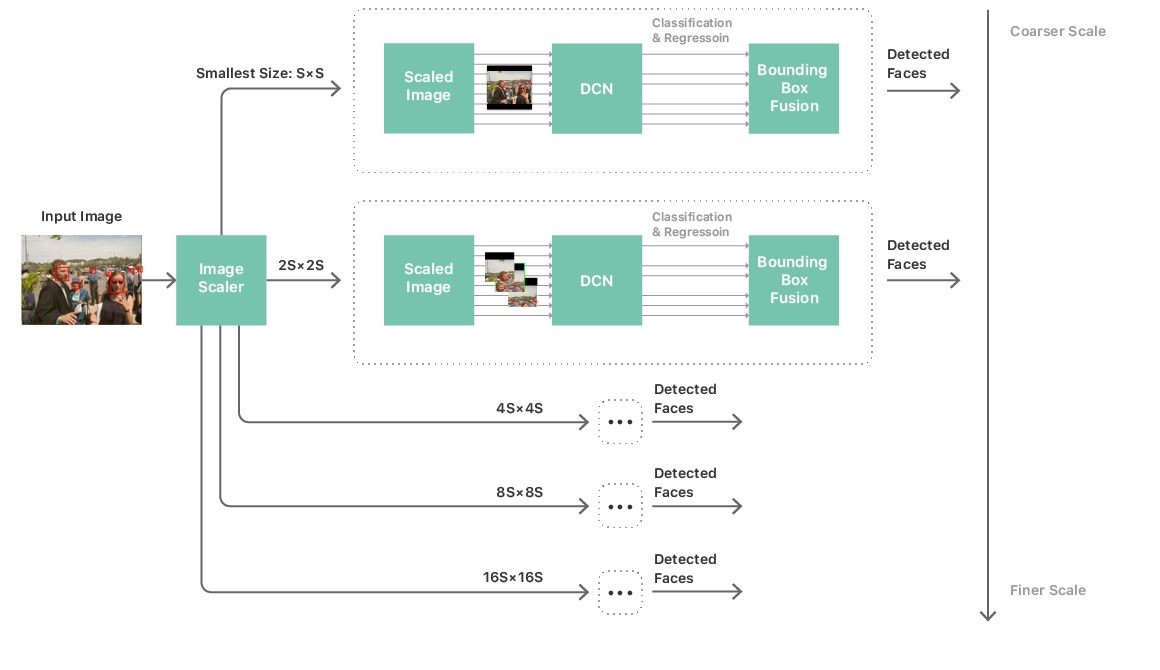

Apple’s latest entry in its online Machine Learning Journal, titled “An On-device Deep Neural Network for Face Detection,” details how face detection works and how it relates to the Vision framework, which developers can use in apps for iOS and Mac.

Apple says it has optimized the framework to take full advantage of CPUs and GPUs, using its Metal graphics API and BNNS (Basic Neural Network Subroutines).

Apple also describes the constraints of running deep learning on device: “The deep-learning models need to be shipped as part of the operating system, taking up valuable NAND storage space. They also need to be loaded into RAM and require significant computational time on the GPU and/or CPU. Unlike cloud-based services, whose resources can be dedicated solely to a vision problem, on-device computation must take place while sharing these system resources with other running applications. Finally, the computation must be efficient enough to process a large Photos library in a reasonably short amount of time, but without significant power usage or thermal increase.”

To work within these limits, Apple used an approach informally called “teacher-student” training: “This approach provided us a mechanism to train a second thin-and-deep network (the ‘student’), in such a way that it matched very closely the outputs of the big complex network (the ‘teacher’) that we had trained as described previously.”
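The teacher-student idea can be illustrated with a toy sketch. In this Python example, the `teacher`, `student` and `distill` functions and all parameter values are hypothetical stand-ins, not Apple’s networks: the “teacher” is a fixed function playing the role of an already-trained model, and a tiny linear “student” is fitted by stochastic gradient descent to reproduce the teacher’s outputs (rather than ground-truth labels) under a squared-error loss.

```python
import random


def teacher(x):
    # Hypothetical stand-in for the large, already-trained "teacher" network:
    # a fixed function whose outputs the student should learn to reproduce.
    return 3.0 * x[0] - 2.0 * x[1] + 0.5


def student(params, x):
    # Hypothetical small "student" model: a linear model with weights w0, w1 and bias b.
    w0, w1, b = params
    return w0 * x[0] + w1 * x[1] + b


def distill(steps=2000, lr=0.05, seed=0):
    """Fit the student to match the teacher's outputs with plain SGD."""
    rng = random.Random(seed)
    params = [0.0, 0.0, 0.0]
    for _ in range(steps):
        # Sample an unlabeled input; the teacher's output is the training target.
        x = (rng.uniform(-1.0, 1.0), rng.uniform(-1.0, 1.0))
        target = teacher(x)
        # Derivative of 0.5 * (prediction - target)**2 with respect to the prediction.
        err = student(params, x) - target
        # Gradient step for each parameter.
        params[0] -= lr * err * x[0]
        params[1] -= lr * err * x[1]
        params[2] -= lr * err
    return params


if __name__ == "__main__":
    learned = distill()
    print("student parameters:", [round(p, 4) for p in learned])
```

Calling `distill()` should return student parameters very close to the teacher’s (3.0, -2.0, 0.5). In a real system both models would be neural networks, and the point of the exercise is that the student is much cheaper to run than the teacher while producing nearly the same outputs.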

The result was a deep neural network for face detection efficient enough to run on device. The company has been investing heavily in machine learning, even building a dedicated “Neural Engine” into the A11 Bionic chip in the iPhone X and iPhone 8.

You can read the full journal entry at this link.


P.S. Want to keep this site truly independent? Support us by buying us a beer, treating us to a coffee, or shopping through Amazon here. Links in this post are affiliate links, so we earn a tiny commission at no charge to you. Thanks for supporting independent Canadian media!