ChatGPT Desktop Coming; New GPT-4o Impresses in Demo [VIDEO]

OpenAI CEO Sam Altman and the company’s team unveiled significant updates to ChatGPT and introduced GPT-4o, the latest model in the GPT series, during a presentation on Monday.

The new model, which OpenAI describes as smarter, faster, and natively multimodal, will be available to all ChatGPT users, including those on the free plan. Previously, GPT-4-class models were exclusive to paying subscribers.

The macOS ChatGPT app is coming to Plus users starting today, while OpenAI plans to launch a Windows version “later this year.”

“Today, GPT-4o is much better than any existing model at understanding and discussing the images you share. For example, you can now take a picture of a menu in a different language and talk to GPT-4o to translate it, learn about the food’s history and significance, and get recommendations,” explained OpenAI.

“In the future, improvements will allow for more natural, real-time voice conversation and the ability to converse with ChatGPT via real-time video. For example, you could show ChatGPT a live sports game and ask it to explain the rules to you. We plan to launch a new Voice Mode with these new capabilities in an alpha in the coming weeks, with early access for Plus users as we roll out more broadly,” said OpenAI. That is going to be so cool.

GPT-4o is designed to excel particularly in coding tasks and will be offered at half the cost and double the speed of its predecessor, GPT-4-turbo, in the API. Additionally, it will feature a fivefold increase in rate limits, making it more accessible and efficient for developers.
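To make those ratios concrete, here is a minimal Python sketch that applies "half the cost, double the speed, five times the rate limit" to a baseline. The baseline figures and the names `ApiTerms` and `apply_gpt4o_ratios` are hypothetical, chosen only for illustration; they are not OpenAI's published prices or limits.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ApiTerms:
    """Illustrative API terms; every figure used below is a made-up placeholder."""
    price_per_million_tokens: float  # USD, hypothetical
    tokens_per_second: float         # hypothetical throughput
    requests_per_minute: int         # hypothetical rate limit


def apply_gpt4o_ratios(baseline: ApiTerms) -> ApiTerms:
    """Apply the ratios quoted in the article to a baseline set of terms."""
    return ApiTerms(
        price_per_million_tokens=baseline.price_per_million_tokens / 2,  # half the cost
        tokens_per_second=baseline.tokens_per_second * 2,                # double the speed
        requests_per_minute=baseline.requests_per_minute * 5,            # fivefold rate limit
    )


# Hypothetical baseline standing in for the predecessor model (not real pricing).
baseline = ApiTerms(price_per_million_tokens=10.0, tokens_per_second=20.0, requests_per_minute=100)
gpt4o = apply_gpt4o_ratios(baseline)
print(gpt4o)
```

Swap in the actual figures from OpenAI's pricing and rate-limit pages to see what the change would mean for a given workload.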

Here’s a demo of the new GPT-4o talking to GPT (now that’s some inception right there):

Introducing GPT-4o, our new model which can reason across text, audio, and video in real time.

It's extremely versatile, fun to play with, and is a step towards a much more natural form of human-computer interaction (and even human-computer-computer interaction): pic.twitter.com/VLG7TJ1JQx

— Greg Brockman (@gdb) May 13, 2024

Altman highlighted the model’s real-time voice and video capabilities, which he described as exceptionally natural. “It’s hard to get across by just tweeting,” Altman noted, indicating that these features would be rolled out in the coming weeks.

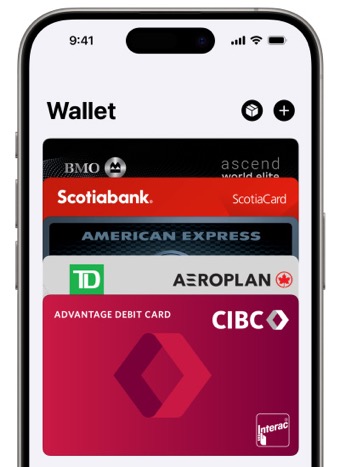

Demonstrations included the use of an iPhone’s rear camera to show ChatGPT visual inputs, with users speaking directly to ChatGPT and even interrupting the chatbot. The natural conversation in voice mode is unreal. You can now interrupt the model at any time, and ChatGPT responds in real time. The model can also pick up on your emotion, as shown in a demo where it could hear breathing. It also has a wide variety of styles and ranges when talking back to you.

Further showcasing the model’s capabilities, a live demo featured ChatGPT acting as a real-time translator between Italian and English. Definitely impressive stuff.

These updates are part of OpenAI’s mission to democratize access to advanced AI tools, the company says. Check out Mira Murati, OpenAI’s CTO, revealing and explaining GPT-4o below, along with a demo of the new update. It was also super impressive to see ChatGPT solve a math problem using video:

OpenAI said it now has over 100 million people using ChatGPT.

Man, if only Siri could be as smart as ChatGPT. Maybe a deal is forthcoming at WWDC, as rumoured?
