Apple Music Does Not Match Files Using Acoustic Fingerprinting

Unlike iTunes Match, which uses acoustic fingerprinting (it analyzes the audio itself and matches it to the same recording), Apple Music matches files using metadata only, as Kirk McElhearn points out. The benefit of acoustic fingerprinting is that the tags on a file don't matter: if a Grateful Dead song is labeled as a song by 50 Cent, iTunes Match still matches the Grateful Dead song correctly. Apple Music, however, does not work like that.

McElhearn explains that Apple Music relies solely on the tags your files contain, which can lead to errors. Because it matches only on tags, Apple Music can’t tell the difference between a studio recording and a live version of a song, or between an explicit version and a clean version.

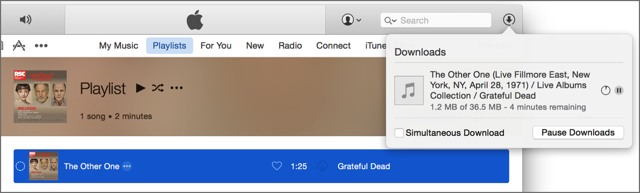

Here’s an example. I took a short speech from a Royal Shakespeare Company CD of excerpts from their current production of The Merchant of Venice. I labeled it “The Other One” by the Grateful Dead. It matched, I deleted the local file, and downloaded this live track from April 1971, which was released on the Skull & Roses album.

Granted, the track that Apple Music gave me is a great version of the song, but at 18:04, it’s far from the 1:25 original track.
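
The mismatch described above can be sketched in a few lines of Python. This is only an illustration of the idea, not how Apple Music or iTunes Match actually work: the catalog, the track dictionaries, the title given to the speech, and the `tag_key` and `audio_key` helpers are all hypothetical, and a plain hash of the audio bytes stands in for a real acoustic fingerprint (a real fingerprint would also tolerate different encodings and bitrates, which a hash does not).

```python
import hashlib


def tag_key(track):
    """Match key built only from the tags; the audio is ignored."""
    return (track["artist"].strip().lower(), track["title"].strip().lower())


def audio_key(track):
    """Crude stand-in for an acoustic fingerprint: a hash of the audio bytes."""
    return hashlib.sha256(track["audio"]).hexdigest()


# Hypothetical two-entry catalog; the audio bytes are placeholders.
catalog = [
    {
        "artist": "Grateful Dead",
        "title": "The Other One",
        "version": "live, April 1971",
        "audio": b"placeholder-live-1971-audio",
    },
    {
        "artist": "Royal Shakespeare Company",
        "title": "Merchant of Venice speech",  # hypothetical title
        "version": "spoken excerpt",
        "audio": b"placeholder-rsc-speech-audio",
    },
]


def match(track, key):
    """Return every catalog entry whose key equals the track's key."""
    wanted = key(track)
    return [entry for entry in catalog if key(entry) == wanted]


# The speech, deliberately mislabeled as a Grateful Dead song.
mislabeled = {
    "artist": "Grateful Dead",
    "title": "The Other One",
    "audio": b"placeholder-rsc-speech-audio",
}

by_tags = match(mislabeled, tag_key)
by_audio = match(mislabeled, audio_key)

print("Matched by tags: ", by_tags[0]["artist"], "/", by_tags[0]["version"])
print("Matched by audio:", by_audio[0]["artist"], "/", by_audio[0]["version"])
```

Matching on tags returns the Grateful Dead live track because only the labels are compared, while matching on the audio returns the speech regardless of how it is labeled.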

Since Apple already has the technology to match tracks using acoustic fingerprinting, why is it not using it with Apple Music?