How ‘Face ID’ Camera on iPhone 8 Will Work According to KGI [PIC]

Update Sept. 9: The OLED model will be called iPhone X, according to iOS 11 details leaked this morning. Keep that in mind when reading this post, which was published last night (in other words, replace iPhone 8 with iPhone X).

With iOS 11 GM leaked, more details have been revealed about Apple’s upcoming OLED iPhone 8, Apple Watch Series 3 and new AirPods.

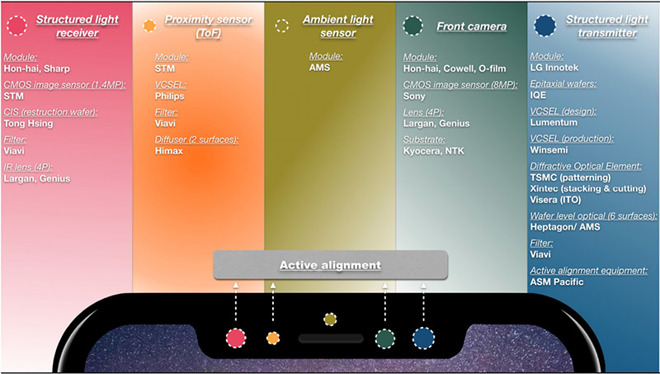

Now, KGI Securities analyst Ming-Chi Kuo has released a note to investors, detailing how the ‘Face ID’ camera within iPhone 8 will work. In the iPhone 8 ‘notch’, there will be a structured light transmitter (new), structured light receiver (new), proximity sensor, ambient light sensor and front camera.

Here’s how Kuo explains the different components working together (via 9to5Mac):

Structured light used to collect depth information, integrating with 2D image data from front camera to build the complete 3D image.

Given the distance constraints of the structured light transmitter and receiver (we estimate 50-100cm), a proximity sensor (with ToF function) is needed to remind users to adjust their iPhone to the most optimal distance for 3D sensing.
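To make that distance check concrete, here is a minimal sketch of how a reading from the proximity sensor could be turned into a prompt. It is purely illustrative: the 50-100cm range is Kuo's estimate, while the function name, constants and messages are hypothetical and not Apple's implementation.

```python
from typing import Optional

# Assumed working range, based on Kuo's 50-100 cm estimate (not an Apple spec).
MIN_DISTANCE_CM = 50.0
MAX_DISTANCE_CM = 100.0


def distance_hint(distance_cm: float) -> Optional[str]:
    """Return a prompt for the user, or None when the face is within range.

    `distance_cm` stands in for a time-of-flight reading from the
    proximity sensor; the name and messages are hypothetical.
    """
    if distance_cm < MIN_DISTANCE_CM:
        return "Move iPhone farther away"
    if distance_cm > MAX_DISTANCE_CM:
        return "Move iPhone closer"
    return None  # within range, so 3D sensing can proceed
```

Under these assumptions, a reading of 30cm would prompt the user to move the phone away, and a reading of 75cm would return no prompt at all.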

The new structured light transmitter has six components, explains Kuo: active alignment equipment, filter, wafer level optical, diffractive optical element, VCSEL, and an epitaxial wafer.

As for the structured light receiver, it has four components: an IR lens, filter, CIS, and CMOS image sensor (1.4MP).

Below is a graphic from Kuo obtained by AppleInsider, showing all the different suppliers for Face ID:

Kuo says there may be 3D sensing component supply issues, which is why many are noting iPhone 8 will be in short supply and ship later this fall.

The analyst also says iPhone 8 will come in white, black and gold rear casings, while the front glass on all models will be black (yes!) to conceal the sensors, which would be more visible on a white front.

Get your credit cards ready, folks. iPhone 8 pre-orders await!



Black front with white back is my fav colour combo for 5c. I’ll be getting that while everyone’s after black on black.

iPhone X. iPhone 8 probably won’t have this.

This post was published prior to the leak of ‘iPhone X’, just FYI 🙂

Yup.

Gary, you ready for preorder? I have already set my alarm for 3 am EST on Sep 22 lol!

Yes! But you may want to set for Sept. 15th as well in case 🙂