Instagram Adds New Features Aimed at Protecting Younger Users

Instagram is attempting to make its photo- and video-sharing social network a safer place for younger users.

Instagram on Tuesday said it’s introducing new features aimed at protecting young people on the photo-sharing app, including prompts about “potentially suspicious” direct messages and restrictions on messages between teens and adults they don’t follow.

“These updates are a part of our ongoing efforts to protect young people — and our specialist teams continue to invest in new interventions that further limit inappropriate interactions between adults and teens,” reads the Instagram blog post.

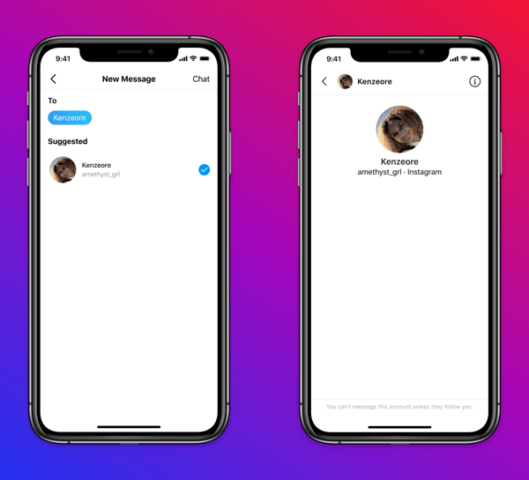

The service is launching a string of preventative measures to keep teens protected, most notably restrictions on direct messages. Adults now can’t message under-18s who don’t follow them. The restriction works through a combination of the age users give when they sign up and machine learning that predicts people’s ages.

Teens themselves will also get safety notices in their DMs if an adult they’ve been messaging has been sending a lot of friend and message requests to people under 18. A teen who receives one can end the conversation, or block, report, or restrict the adult.

It’ll also be more difficult for sketchy adults to find those teens in the first place. Instagram will soon begin exploring ways to prevent adults with potentially dodgy behavior from interacting with teens, such as removing teen accounts from suggestions, Reels, and Explore. The social media giant might automatically hide these adults’ comments on public posts, too.

The platform will also encourage young people to keep their accounts private. Teens can still opt for a public account if they choose to do so after learning more about the options. Instagram will send notifications if the teen doesn’t choose “private” when signing up. In these notifications, it will highlight the benefits of a private account.

