EU Proposes New Child Abuse Content Detection in Chat Apps

According to The Verge, the European Commission has proposed new rules that would require messaging apps and other private platforms to scan users’ encrypted messages for child sexual abuse material (CSAM) and communication that constitutes “grooming” a minor or the “solicitation of children.”

A draft of the proposal, leaked earlier this week, was poorly received by privacy experts. Critics said the EU’s plans are even more invasive than the CSAM detection system Apple proposed and almost implemented last year, but ultimately delayed indefinitely after apps like WhatsApp and more than 90 activist groups all pushed back against it.

“This document is the most terrifying thing I’ve ever seen,” cryptography professor Matthew Green said in a tweet. “It describes the most sophisticated mass surveillance machinery ever deployed outside of China and the USSR. Not an exaggeration.”

This document is the most terrifying thing I’ve ever seen. It is proposing a new mass surveillance system that will read private text messages, not to detect CSAM, but to detect “grooming”. Read for yourself. pic.twitter.com/iYkRccq9ZP

— Matthew Green (@matthew_d_green) May 10, 2022

“This looks like a shameful general #surveillance law entirely unfitting for any free democracy,” said Jan Penfrat, a member of the digital advocacy group European Digital Rights (EDRi).

If passed, the regulation would require “online service providers,” a category that includes app stores, hosting companies, and “interpersonal communications service” providers such as messaging apps, to scan the private messages of specific users once they receive a “detection order” covering those users. Providers would have to scan for CSAM and for behaviour that amounts to “grooming” or the “solicitation of children.”

What’s more, machine vision tools and AI systems would be used to identify text and images that fall into the latter two categories. These technologies are not fully accurate, so they can wrongly flag innocent messages.

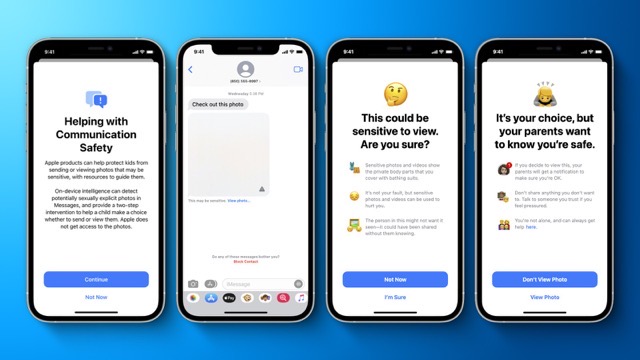

Apple’s proposal, in comparison, sought to automatically scan users’ messages and iCloud Photos for known examples of CSAM. Apple admitted in August 2021 that it had been scanning iCloud Mail for CSAM since 2019.

“Detection orders” would be issued by individual EU nations. While the Commission claims that these orders would be “targeted and specified,” not much is known about exactly how they will work or what parameters will be used to target users.

Narrow targeting carries the risk of marginalizing individuals and groups. On the other hand, targeting large groups of people at once can be highly invasive. What’s more, the proposed rules threaten the efficacy of end-to-end encryption by introducing foreign, unrelated software into the encryption pipeline.

“There’s no way to do what the EU proposal seeks to do, other than for governments to read and scan user messages on a massive scale,” Joe Mullin, a senior policy analyst at the digital rights group Electronic Frontier Foundation (EFF), told CNBC. “If it becomes law, the proposal would be a disaster for user privacy not just in the EU but throughout the world.”


The EU will screw up the Internet, again.

Well, consider that Apple already has its nudity detection software on all iOS 15 devices. It can already scan for any and all nude images, including nudity in any messaging app. This software is already installed on every iPhone running the latest version of iOS 15, so it should take very little effort on Apple’s part to now scan for CSAM content or images.

Also, let’s not forget that Apple wanted to install its CSAM detection software on every iOS device last year, before the EU got involved, but had to back away after the backlash from its own followers. Apple then put its nudity detection software on every iOS device instead, under the guise that it would only be used by parents and enabled on their kids’ iPhones. But that software is on every iOS device moving forward. What’s to stop Apple from enabling it on iPhones that are not kids’ iPhones? Nothing! It wouldn’t take much effort for Apple to enable it for any iPhone customer it wants, since all that software is already in the latest iOS versions. Not to mention that Apple could release an iOS update that includes other ML models and data, ones that could also look for guns, weapons, bombs, drugs, and more. Apple could even scan for people of interest.

Still waiting for that one Apple missionary to defend the fruit company’s honour. What’s its name again? Lol.

Where are all those Apple privacy and security zealots again? It seems to me they don’t want to come out and talk about privacy or security when it comes to this stuff. I guess that is because there is no real privacy or security with Apple anymore.