Facebook Announces Plan to Crack Down on Fake News

Following accusations of spreading fake news, Facebook has been busy addressing the problem. Apparently, the social media giant has found a way to reduce the avalanche of hoax news showing up in our news feeds: changing some ad policies, working with external sources, making reporting easier and, as a result, relying on its user base of 1.8 billion (via the New York Times).

Facebook doesn’t want to become an arbiter of truth and believes in giving people a voice, hence the careful approach. The update the social media giant shared today is just one of the steps it is taking to limit fake news on its platform.

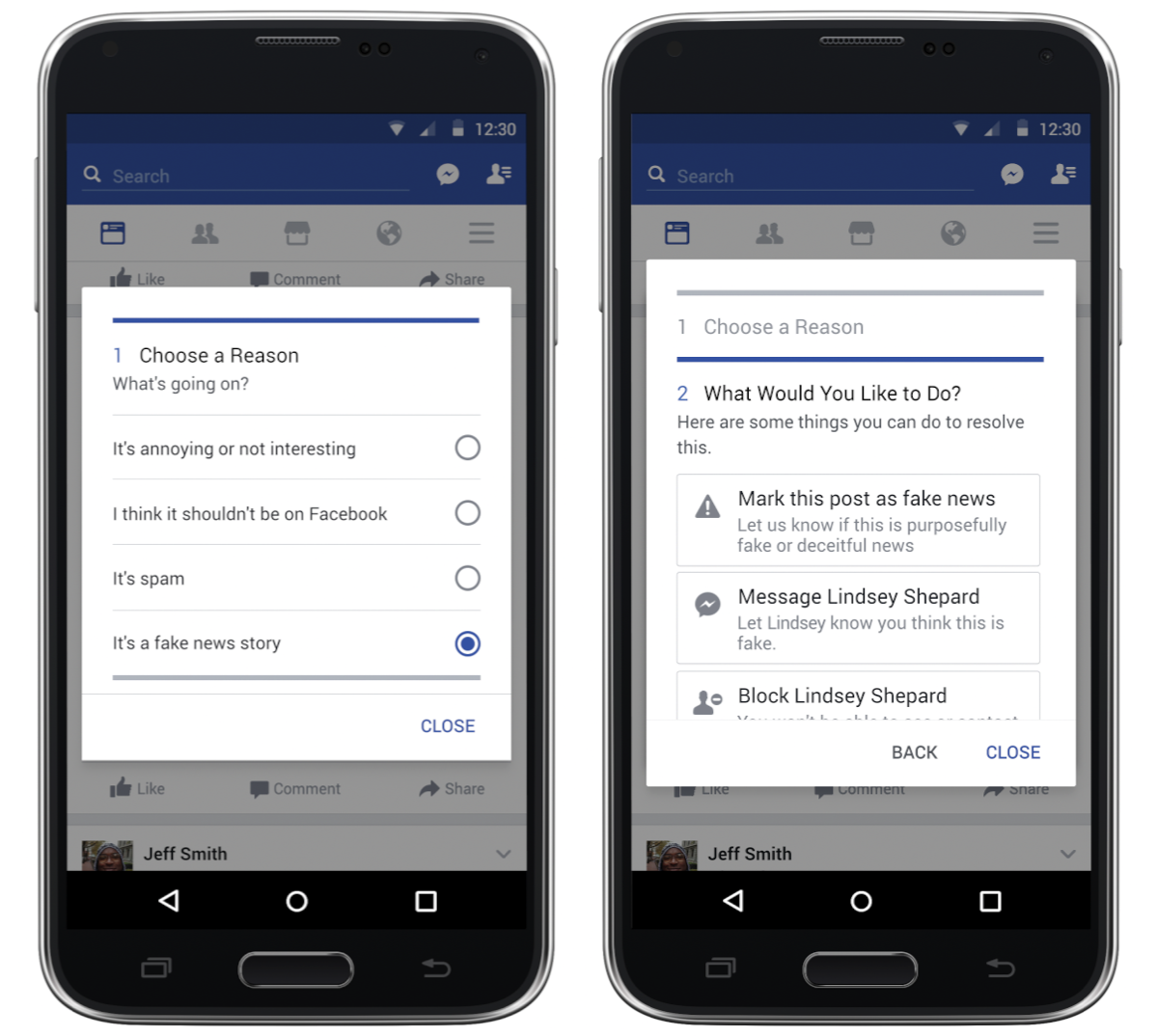

Facebook says you’ll soon be able to flag news as fake by clicking the upper right-hand corner of a post. It is unclear when the feature will roll out, though it may be live already. In addition, stories can be marked as disputed. Facebook says this measure gives people more context and will help them decide what to trust and what to share.

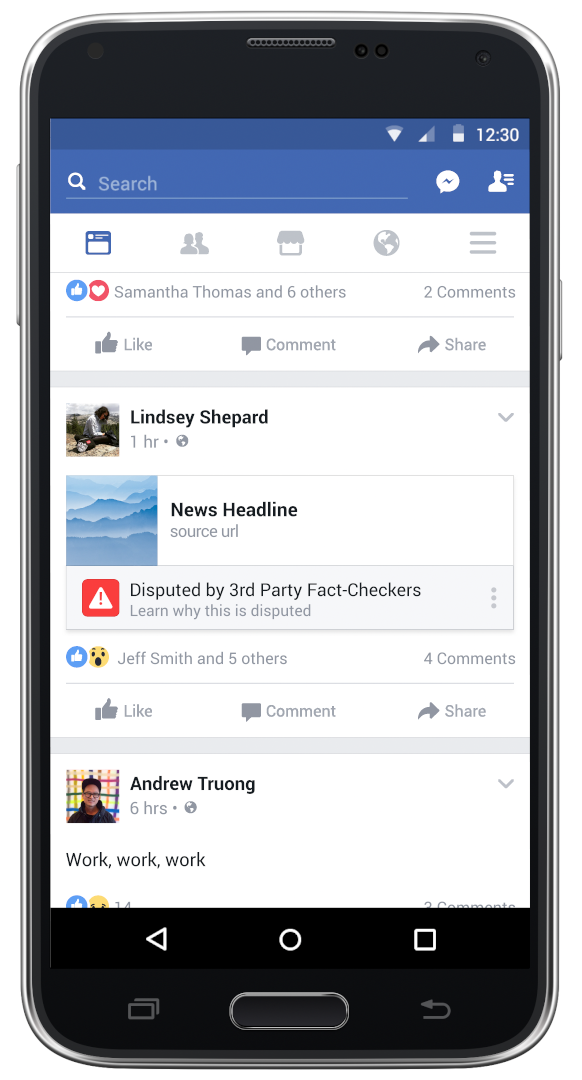

Facebook has started working with third-party fact-checking organisations that are signatories of Poynter’s International Fact-Checking Code of Principles (such as Poynter, Snopes, PolitiFact and ABC News, among others). What you flag will be sent to these organisations, which will review it. If a story is found to be fake, it will be flagged as disputed, with a link explaining why. Disputed stories will still make it to your news feed, just lower down. That’s rather interesting.

Since the spammers who spread fake news are also motivated financially, Facebook is changing its ad policy to reduce such incentives. What do you think? Is this enough?



So nothing by CNN will ever be reported on FB again? Awesome

^ Comment marked as fake ^